User Experience as a Trust Signal: What AI Measures That Your Team Probably Doesn’t

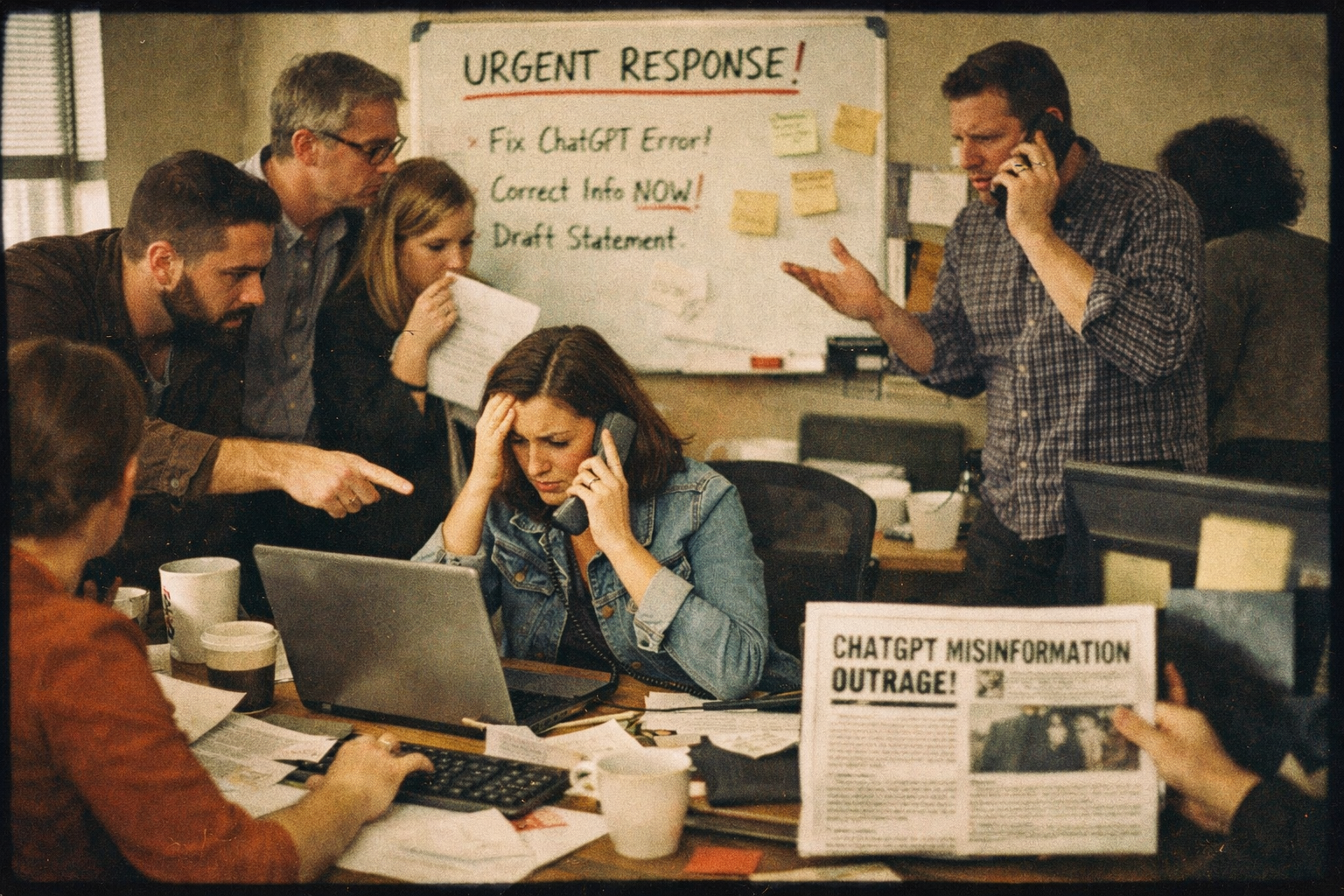

AI systems hallucinate. This is well-documented, widely covered, and by now broadly understood. The conversation about AI hallucination, though, tends to focus on factual errors about the world — wrong historical dates, invented statistics, fabricated citations. What gets far less attention is a more commercially consequential category of error: what happens when AI hallucinates about your brand specifically.

When AI describes your product incorrectly. When it confuses you with a competitor. When it surfaces a company narrative from three years ago that you’ve spent considerable effort moving past. When it tells a buyer that your platform doesn’t support a capability that you’ve offered for eighteen months. When it positions you as a mid-market player when you’ve moved decisively upmarket. These aren’t hypothetical scenarios. They’re happening to brands right now, in AI research sessions that most marketing teams can’t see, at a scale that most marketing teams haven’t begun to account for.

The buyers forming impressions based on these AI-generated characterizations are making real decisions based on them. They’re deciding which vendors make the consideration list and which ones don’t. They’re arriving at conversations with your sales team already carrying misconceptions about what you do and who you serve. They’re sometimes ruling you out entirely, for reasons that have nothing to do with your actual product or market position. That’s the trust crisis. And very few companies are preparing for it.

To understand how AI gets brands wrong, it helps to understand how AI builds its understanding of brands in the first place. AI systems learn about companies from the totality of content they’ve ingested during training: press coverage, analyst reports, review platform data, blog posts, social media discussions, forum threads, comparison articles, competitor mentions, industry news. They synthesize this content into a working model of what your company is, what it does, how it’s positioned, and how it’s perceived.

That synthesis is only as accurate and current as the source material. And the source material has several properties that create predictable distortions. First, it’s weighted toward the past. The bulk of content about most companies was written years ago, when the company may have been in a different stage, serving a different market, or dealing with circumstances that no longer apply. AI doesn’t automatically discount older information in favor of newer information. In practice, material that is widely repeated and heavily cited tends to carry more weight than material that is merely recent.

Second, the source material is weighted toward the dramatic. A crisis, a controversy, a viral negative review, a public dispute with a customer or competitor — these events tend to generate extensive coverage and accumulate significant inbound links. The coverage of a difficult period may simply be more authoritative and more widely referenced than the coverage of the recovery from it. AI doesn’t have a sense that moving on is appropriate. It works with what’s there, weighted by authority, regardless of whether that picture is current or fair.

Third, in systems that use real-time retrieval, the content most likely to surface is whatever ranks most prominently for the query, which may or may not reflect your current positioning. A competitor comparison article written eighteen months ago that still ranks well in search results can feed directly into AI’s characterization of you today, regardless of how much has changed since it was written.

Category misclassification is one of the most common and commercially significant AI brand errors. AI might describe your company as competing in an adjacent category rather than your primary one — or group you with competitors you don’t actually consider close substitutes. This happens when your digital footprint sends ambiguous category signals: when you use language that overlaps with adjacent categories, when early coverage from a different positioning phase still dominates your AI source material, or when competitors you’re frequently mentioned alongside have pulled your category association in a direction that no longer fits.

Category misclassification matters because it affects which consideration sets you appear in. A buyer asking for recommendations in your actual category may not see you if AI has classified you as belonging to a slightly different one. A buyer asking for comparisons in the category AI has placed you in may be evaluating you against competitors you’re not actually competing with. Both outcomes can cost you deals before you even know the opportunity existed.

Outdated positioning narratives are the second major error category, and they’re particularly insidious for companies that have gone through significant changes. Rebrands, pivots, leadership transitions, acquisitions, recoveries from difficult periods — any of these can leave a gap between how AI characterizes your brand and how you actually present yourself today. The old narrative may simply be more extensively documented and more heavily cited than the new one. Generating enough fresh, authoritative coverage to shift the dominant signal takes time and sustained effort, and most companies underestimate how much of both it requires.

Capability gaps and misstatements are the third category of error, and the one that sales teams encounter most directly. AI may be telling buyers your product doesn’t support a use case you’ve supported for over a year, because the most current coverage of your product capabilities predates your recent development work. Or AI may be overstating what you do based on aspirational language in old marketing materials that made promises your product wasn’t quite ready to keep at the time. Either way, buyers arrive at discovery calls with miscalibrated expectations, and the first thing your sales team has to do is correct the record.

Competitor conflation is a subtler problem but a real one, particularly in crowded markets where multiple companies have similar names, overlapping product descriptions, or a largely shared vocabulary. AI can blur the lines between competitors in ways that assign your competitor’s strengths to you, your competitor’s weaknesses to you, or your competitor’s customer profile to you. None of these are outcomes you’d choose, and none of them are under your direct control. But understanding that they can happen, and monitoring for them, is the prerequisite for addressing them.

The core reason most marketing teams are unprepared for AI brand misrepresentation is visibility. The AI sessions where your prospective buyers are forming impressions of your brand are essentially invisible to you. They leave no footprint in your website analytics, no trace in your CRM, no signal in your social monitoring tools. The first evidence that AI has been characterizing your brand inaccurately is often indirect: a sales call with a prospect who’s clearly confused about what you do, a deal that went to a competitor for reasons that don’t quite make sense, a pattern of discovery call objections that don’t map to your actual product.

Most businesses already think they are more trusted than they actually are — there’s a well-documented gap between how brands perceive their own credibility and how buyers actually experience it. AI brand misrepresentation creates an additional layer of this same gap. Most brands are more poorly represented in AI than they realize, and the gap between their perceived AI representation and their actual AI representation is often substantial. They’ve never looked, so they’ve never known what to fix.

This is compounded by the fact that AI representation can vary significantly between systems, between query phrasings, and over time as models are updated and retrieval sources change. A brand that looks reasonably well-represented in ChatGPT may be characterized quite differently in Perplexity, which relies more heavily on real-time retrieval. A query phrased as a category comparison may return different results than the same question phrased as a direct brand lookup. Testing your AI representation comprehensively requires running multiple queries across multiple systems — consistently, over time — which almost no marketing team has operationalized.

The starting point is straightforward: actually look. Set aside a few hours and run the queries your buyers would run across ChatGPT, Perplexity, Gemini, and Copilot, using fresh incognito sessions or asking colleagues outside your organization to run them on your behalf. Run category queries, competitive comparison queries, and direct brand queries. Document the results carefully — not just whether you appear, but exactly how you’re characterized.
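One way to keep that audit consistent is to write the query plan down before running anything. The following is a minimal Python sketch, not a prescribed tool: the prompt templates, the `AuditRun` record, and the example brand, category, and competitor names are all hypothetical placeholders. It only builds the list of prompts to run by hand in each system and leaves room to paste in what came back.

```python
from dataclasses import dataclass
from typing import List, Optional

# The four systems named in the text above.
SYSTEMS = ["ChatGPT", "Perplexity", "Gemini", "Copilot"]

# Hypothetical prompt templates, one per query type the text recommends.
TEMPLATES = {
    "category": "What are the best {category} vendors?",
    "comparison": "How does {brand} compare to {competitor}?",
    "brand": "What does {brand} do and who is it for?",
}


@dataclass
class AuditRun:
    """One prompt to run in one system, plus space for the observed answer."""
    system: str
    query_type: str
    prompt: str
    response: str = ""                      # pasted in by hand after the run
    brand_appeared: Optional[bool] = None   # filled in during review


def build_audit_plan(brand: str, category: str,
                     competitors: List[str]) -> List[AuditRun]:
    """Cross every prompt with every system so no combination is skipped."""
    prompts = [
        ("category", TEMPLATES["category"].format(category=category)),
        ("brand", TEMPLATES["brand"].format(brand=brand)),
    ]
    for rival in competitors:
        prompts.append(
            ("comparison",
             TEMPLATES["comparison"].format(brand=brand, competitor=rival))
        )
    return [AuditRun(system, qtype, prompt)
            for system in SYSTEMS
            for qtype, prompt in prompts]


# Placeholder names for illustration only.
plan = build_audit_plan("ExampleCo", "workflow automation",
                        ["RivalOne", "RivalTwo"])
for run in plan[:4]:
    print(f"[{run.system}] {run.query_type}: {run.prompt}")
```

Reusing the same plan on every audit date makes it easier to tell whether a change in the answers reflects a change in the systems rather than a change in how the questions were asked.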

Read the answers the way a skeptical buyer would. What category does AI place you in? What strengths does it mention? What limitations or concerns? How does it compare you to the competitors it groups you with? Is the product description accurate to your current capabilities? Is the customer profile accurate to your current ICP? Is any of the framing clearly drawn from outdated coverage or circumstances that no longer apply?

Build a gap list from what you find. Every inaccuracy, every outdated element, every mischaracterization is an item on a marketing priority list. Category misclassification means you need to generate more consistent, current content across owned and earned channels that clearly signals your actual category. Outdated narratives mean you need fresh authoritative coverage that displaces old signals. Capability gaps mean your most significant product developments need to be getting substantive coverage, not just press release pickups on low-authority sites.
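The gap list can live in a spreadsheet, but a short Python sketch shows the structure. The error type labels, the `Gap` record, and the sample entries below are hypothetical. The remediation text paraphrases the three responses described above, and any error type without a stated response falls back to a manual review note.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List, Tuple

# Remediation per error type, paraphrased from the paragraph above.
REMEDIATION = {
    "category_misclassification":
        "Publish consistent, current owned and earned content that "
        "clearly signals the actual category.",
    "outdated_narrative":
        "Earn fresh authoritative coverage that displaces old signals.",
    "capability_gap":
        "Get substantive coverage for significant product developments, "
        "not just press release pickups.",
}


@dataclass
class Gap:
    """One inaccuracy found during the audit."""
    system: str       # where it was observed, e.g. "Perplexity"
    error_type: str   # one of the REMEDIATION keys, or a new label
    observed: str     # what the AI said
    actual: str       # what is true today


def prioritize(gaps: List[Gap]) -> List[Tuple[str, int, str]]:
    """Rank error types by how often they were observed."""
    counts = Counter(g.error_type for g in gaps)
    return [
        (etype, n, REMEDIATION.get(etype, "No stated response; review manually."))
        for etype, n in counts.most_common()
    ]


# Illustrative entries only; these are not real audit findings.
gaps = [
    Gap("ChatGPT", "capability_gap", "No SSO support", "SSO has shipped"),
    Gap("Gemini", "capability_gap", "No SSO support", "SSO has shipped"),
    Gap("Perplexity", "outdated_narrative", "Serves small teams",
        "Now sells to enterprises"),
]
for etype, n, action in prioritize(gaps):
    print(f"{etype} ({n}): {action}")
```

Counting by error type is only one possible ordering. A team might reasonably weight gaps by deal impact instead.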

The most effective response to AI brand misrepresentation is not reactive and it’s not surgical. You can’t simply ask AI to correct its characterization of you. You can’t submit a correction form. What you can do is invest consistently in generating the kind of fresh, authoritative, accurate third-party content that becomes the dominant signal AI draws on — displacing older, less favorable, or less accurate signals through sheer weight of authoritative coverage.

This means maintaining a consistent earned media program, not an episodic one. A burst of press coverage this quarter followed by silence for six months produces a spike and then a gap. AI draws on the accumulated weight of coverage over time, and gaps in that accumulation allow older signals to reassert dominance. The brands whose AI representation improves steadily over time are the ones generating consistent, sustained, high-quality earned media coverage that continuously refreshes the record AI draws on.

It means treating review platform management as an ongoing practice rather than a periodic initiative. Fresh, specific, positive reviews continuously signal to AI that your product is currently serving customers well, not just that it was doing so two or three years ago. The factors that make buyers trust reviews (recency, specificity, volume, response rate) are the same factors that determine whether your review profile reads to AI as a strong current signal or as faded historical data.

It means engaging the analyst community regularly enough that your brand appears in current analyst coverage, not just in reports from two years ago. And it means treating reputation management as proactive brand-building rather than crisis response — the consistent, forward-looking investment in positive signal generation that makes AI misrepresentation progressively less likely over time.

There’s no quick fix for entrenched AI brand misrepresentation. If the dominant signal in your category is a narrative that doesn’t serve you, correcting it takes months of consistent investment, not days. The depth and authority of the old signal determine how much new signal you need to generate to displace it, and for brands that have genuinely neglected their external validation presence, that investment can be substantial.

What makes the investment worthwhile — beyond the obvious benefit of accurate AI representation — is that the program that corrects AI misrepresentation is the same program that prevents it in the first place. Consistent earned media investment, proactive review cultivation, sustained analyst engagement, regular AI audits — these practices, maintained over time, produce a trust signal infrastructure so strong and so current that accurate, favorable AI representation becomes the default outcome rather than the exception. The goal isn’t to fix today’s misrepresentation and then stop. It’s to build the kind of brand presence that makes accurate representation self-sustaining.

The eight factors that make or break brand trust don’t shift overnight, in either direction. But brands that invest consistently in the right signals — responsiveness, transparency, authoritative coverage, genuine peer validation — find that their AI representation improves steadily over time as a natural byproduct of building a genuinely trustworthy brand. That’s the most durable correction available. And it starts with the audit that most companies haven’t done yet.

Scott is founder and CEO of Idea Grove, one of the most forward-looking public relations agencies in the United States. Idea Grove focuses on helping technology companies reach media and buyers, with clients ranging from venture-backed startups to Fortune 100 companies.
